Wikipedia Redesign

During my senior year at RISD, I took on a redesign of Wikipedia aimed at encouraging more contributions and improving accuracy.

My initial assumption was that the lack of contributions stemmed from the cumbersome system users are thrown into when they start editing. While that was part of the problem, many users said they felt they didn't know enough about any topic to contribute, or believed that a contribution had to be substantial.

A large portion of the project ended up being user research and general research. As a usability benchmark, I had users think aloud as they completed a series of tasks on Vimeo, Facebook, and Flickr. With this baseline in place, I then had the same users attempt very similar tasks on Wikipedia. While almost all of the users completed every task on the other sites, only one of the tested users was able to complete any of the tasks on Wikipedia.

The issue of accuracy came down to one simple rule: the likelihood that a piece of content is spam is inversely proportional to the amount of peer review it has received.

Microcontributions

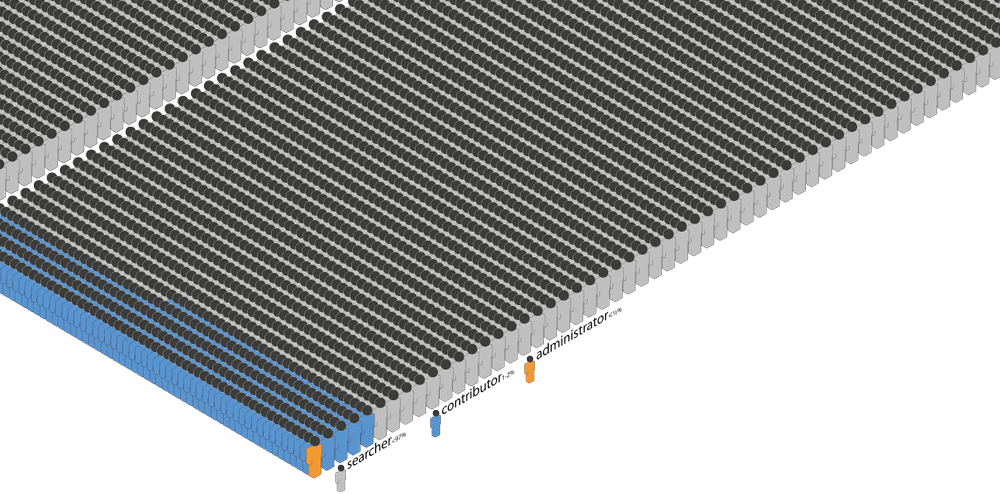

Wikipedia makes good use of bots to catch obvious spam edits, but incorrect information inserted by users (accidentally or maliciously) is much harder to detect. Peer review is the only reliable way to combat it.

While administrators may review specific edits, they make up fewer than one in 32,000 users.

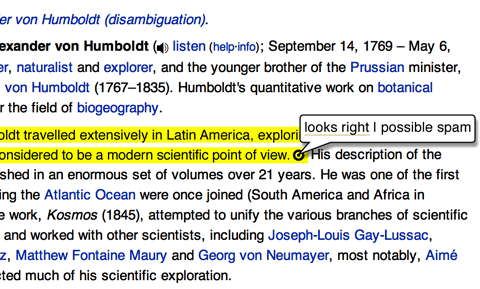

Microcontributions can speed up peer review with something as simple as highlighting new content and asking readers whether it looks correct. This also shows users that articles are in constant flux.
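To make the idea more concrete, here is a minimal sketch of how a highlighted edit might collect these one-click reviews. It assumes each new edit stays highlighted until a hypothetical number of readers (`CONFIRMATIONS_NEEDED`) confirm it, and that a single "doesn't look right" vote sends it to fuller review; the names and the threshold are illustrative, not part of Wikipedia or the original design.

```python
from dataclasses import dataclass, field

# Hypothetical threshold: independent "looks correct" votes needed before a
# new edit stops being highlighted. Not a real Wikipedia setting.
CONFIRMATIONS_NEEDED = 3


@dataclass
class Edit:
    text: str
    confirmations: set[str] = field(default_factory=set)
    flags: set[str] = field(default_factory=set)

    def record_vote(self, user: str, looks_correct: bool) -> None:
        # Each reader gets one vote; a later vote replaces their earlier one.
        self.confirmations.discard(user)
        self.flags.discard(user)
        (self.confirmations if looks_correct else self.flags).add(user)

    def status(self) -> str:
        # Any flag sends the edit to closer review (assumed policy).
        if self.flags:
            return "needs review"
        if len(self.confirmations) >= CONFIRMATIONS_NEEDED:
            return "confirmed"
        return "highlighted"
```

Because each vote costs a reader only a single click, the review burden is spread across many people instead of resting on the small pool of administrators.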

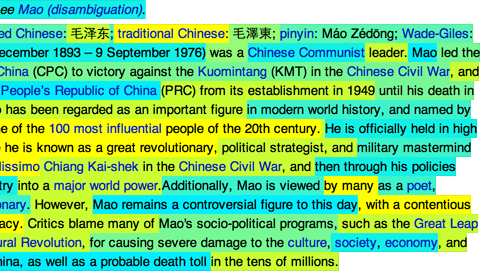

The living article

The users I tested understood that articles could be edited by anyone, but were generally unaware of just how often pages change; they assumed the content on older pages was essentially static. In reality, the average general-topic article is edited more than five times a day, and popular articles or new topics are edited dozens or even hundreds of times a day.

Color-coding content by age shows readers just how alive these articles really are.
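One way to drive this kind of color-coding is to map each span of text to a color based on when it was last edited. The sketch below assumes every span carries a last-edited timestamp (as a revision-history "blame" view could supply); the age buckets and hex colors are hypothetical choices for illustration, not the values used in the design.

```python
from datetime import datetime, timedelta

# Hypothetical age buckets, checked from newest to oldest. Warmer colors mark
# more recently edited text; thresholds are illustrative assumptions.
AGE_BUCKETS = [
    (timedelta(hours=1), "#d7301f"),   # edited within the last hour
    (timedelta(days=1), "#fc8d59"),    # within the last day
    (timedelta(days=7), "#fdcc8a"),    # within the last week
    (timedelta(days=30), "#fef0d9"),   # within the last month
]
OLDER = "#ffffff"  # no highlight for text older than the last bucket


def color_for_age(last_edited: datetime, now: datetime) -> str:
    """Return the highlight color for a span last edited at `last_edited`."""
    age = now - last_edited
    for limit, color in AGE_BUCKETS:
        if age < limit:
            return color
    return OLDER
```

For example, a sentence edited 30 minutes ago gets the warmest color, while one untouched for two months stays unhighlighted; on a frequently edited article, most of the page would visibly shift through these colors over a single day.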